The Fiber Exchange Redefining Global Network Strategy

Connecting Cable Landing Stations Is the Future of Global Network Strategy

The cable landing station is no longer a passive endpoint. It has become the defining infrastructure node of the AI era — and the carriers who recognize this are building tomorrow's network architectures today.

From access point to infrastructure core

In today's hyper-connected world, the role of the cable landing station (CLS) is transforming faster than ever. No longer simply an access point for undersea cables, the CLS is emerging as a critical hub that networks increasingly rely on for primary connectivity and, now, for AI-era compute delivered at the network edge.

Recent industry analyses reinforce this shift, underscoring how direct interconnection at CLSs drives lower latency, enhanced resiliency, and cost efficiency. At NJFX, we have always been forward-thinking. As the first carrier-neutral CLS and colocation facility in the U.S., we've been empowering companies with direct, secure access to major subsea cables spanning North America, Europe, South America, and the Caribbean.

That model is now accelerating dramatically. Networks are beginning to leverage CLSs as their primary point of interconnection — while still using other Points of Presence to ensure network diversity and redundancy. And the newest wave of demand isn't purely connectivity. It's compute, inference, and AI.

The cable landing station is no longer just a network access point — it's becoming the infrastructure core for tomorrow's digital economy.

Gil Santaliz, CEO, NJFX

A fundamental shift, confirmed by 2025–2026 developments

The trajectory we've long championed at NJFX is now being validated at a global scale. Data Center Dynamics calls this the era of "dynamic scalability, strategic redundancy, and adaptive infrastructure" — where CLSs evolve from interconnection points into foundational nodes of integrated global network infrastructure.

The industry is also witnessing a decisive move toward open, neutral CLS models. Traditionally, single-operator control of a landing station created barriers and bottlenecks. That model is giving way to carrier-neutral facilities that allow multiple network providers to share infrastructure, fostering competition, reducing costs, and enabling far more dynamic interconnection options.

The hybrid CLS emerges

The most transformative development of 2025 is the rise of the hybrid cable landing station — facilities that integrate subsea connectivity with AI processing capabilities, enabling localized compute power right at network entry points. This evolution supports the surging demand for distributed inference deployments, moving beyond traditional hyperscaler-only models.

Hybrid CLSs reduce the need for long-haul data transport, cutting latency for AI-driven applications while optimizing bandwidth utilization. This is exactly the model NJFX has spent a decade building toward — and why our campus in Wall, NJ is exceptionally well-positioned for what comes next.

Exceeding $900B by 2029, driving massive new demand for subsea edge infrastructure.

Source: IDC Worldwide Quarterly AI Infrastructure Tracker.

Recent milestones powering the future

The last eighteen months have brought a series of landmark developments to our campus — each a concrete expression of the CLS-as-infrastructure-core thesis playing out in real time.

Leading European bank joins the NJFX ecosystem

A major multinational institution with a presence spanning 65 countries selected NJFX to enhance cloud connectivity and explore advanced AI applications.

Financial Services

Boldyn deploys Ciena WaveLogic 6 at NJFX

Delivering wavelength services scalable to 1.6 Tb/s — the bandwidth and optical networking capabilities essential for AI-intensive workloads at the subsea edge.

AI Infrastructure

Colt connects Apollo South to NJFX

Colt Technology Services expanded its global fiber reach directly into New Jersey, linking NJFX to the Apollo South cable landing station about 3 miles away and setting new standards for network resilience.

Connectivity

NJFX celebrates 10 Years / 10 MW

A decade as North America's first carrier-neutral CLS campus, marked by reaching 10 MW of dedicated power capacity — purpose-built for the AI infrastructure era.

Anniversary Milestone

Hyperscalers are betting on the subsea edge

The largest technology companies in the world are making enormous bets that validate the CLS-centric network thesis. Google's America-India Connect initiative — part of a $15 billion AI infrastructure investment through 2030 — is connecting four continents through subsea cable infrastructure, with open cable landing stations positioned as strategic hubs for a new global data corridor.

This is the exact architecture NJFX pioneered on the U.S. East Coast: multiple independent subsea systems, diverse terrestrial backhaul, and a carrier-neutral environment where global and domestic networks interconnect freely — without recurring cross-connect fees, without backhaul bottlenecks, and without single points of failure.

As data volumes skyrocket and real-time AI applications become the norm, what was once a forward-looking thesis has become urgent infrastructure policy. The network industry's center of gravity is moving to the edge — to where the cables land.

Direct interconnection at cable landing stations represents more than just a trend. It is a fundamental shift in network architecture and strategy.

NJFX, Original Publication

Why direct CLS interconnection wins

The strategic benefits of connecting directly at the cable landing station — rather than backhauling through legacy carrier hotels in NYC, Ashburn, or Miami — are clearer than ever in 2026.

Lower latency for AI workloads

Proximity to diverse network nodes minimizes delay, which is mission-critical for real-time AI inference applications operating on tight latency budgets at scale.

Improved network resilience

Direct CLS interconnection combined with diversified PoPs ensures robust continuity — eliminating single points of failure that plague legacy city-center routes.

Direct global market access

Immediate connectivity to five subsea cables spanning North America, Europe, South America, and the Caribbean, all from a single carrier-neutral campus.

Cost-effective scalability

Reducing backhauling and eliminating recurring cross-connect fees translates directly into economic benefits for expanding network infrastructure at any scale.
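The latency and resilience benefits listed above rest on two simple relationships, sketched below as an illustration rather than as NJFX's own engineering model. Light in fiber travels at roughly c divided by the fiber's group index, so every extra kilometre of backhaul adds a fixed amount of round-trip delay; and a service carried over several independent paths is down only when all of them fail at once. The group index of 1.468 (typical of standard single-mode fiber near 1550 nm), the 100 km detour, and the 0.999 per-path availability are all assumptions chosen for illustration, not measured NJFX figures, and the function names are hypothetical.

```python
# Speed of light in vacuum, in km per second.
C_VACUUM_KM_S = 299_792.458
# Assumed group index of standard single-mode fiber (~1.468); an assumption, not a measured value.
FIBER_GROUP_INDEX = 1.468


def fiber_rtt_ms(route_km: float, group_index: float = FIBER_GROUP_INDEX) -> float:
    """Round-trip propagation delay in milliseconds over route_km of fiber.

    Counts only time-of-flight in the glass; equipment, queuing, and
    serialization delays are ignored.
    """
    one_way_s = route_km * group_index / C_VACUUM_KM_S
    return 2 * one_way_s * 1000.0


def parallel_availability(path_availabilities: list[float]) -> float:
    """Availability of a service that stays up while at least one path is up.

    Assumes path failures are independent: A = 1 - prod(1 - a_i).
    """
    unavailable = 1.0
    for a in path_availabilities:
        if not 0.0 <= a <= 1.0:
            raise ValueError("availability must be in [0, 1]")
        unavailable *= 1.0 - a
    return 1.0 - unavailable


if __name__ == "__main__":
    # Hypothetical backhaul detour (not a real NJFX route length).
    extra_km = 100
    print(f"Extra RTT for a {extra_km} km detour: {fiber_rtt_ms(extra_km):.3f} ms")

    # Hypothetical per-path availability, with independent failures assumed.
    single = 0.999
    print(f"One path:  {parallel_availability([single]):.6f}")
    print(f"Two paths: {parallel_availability([single, single]):.6f}")
```

Under these assumptions, each 100 km of extra fiber costs close to a millisecond of round-trip time, and adding a second independent path turns roughly 8.8 hours of expected annual downtime into well under a minute. The independence assumption is the weak point: routes that share a conduit, a bore pipe, or a landing point tend to fail together, which is why physically diverse backhaul matters as much as the number of paths.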

A decade of building toward this moment

NJFX opens North America's first carrier-neutral CLS campus

Wall Township, NJ — the first colocation facility in the U.S. designed specifically at a cable landing station, with carrier-neutral access from day one.

First-ever CLS-to-CLS terrestrial connection

Windstream connected NJFX to Telxius at Virginia Beach — the first carrier-neutral CLS-to-CLS route on the U.S. East Coast, with access to over 500 Tbps of combined subsea capacity.

NJFX acquires SubCom bore pipe assets

NJFX acquired SubCom's bore pipe assets, securing ownership of our front-haul systems. This milestone gives us direct control over critical last-mile infrastructure, delivering greater resilience and trust for our customers.

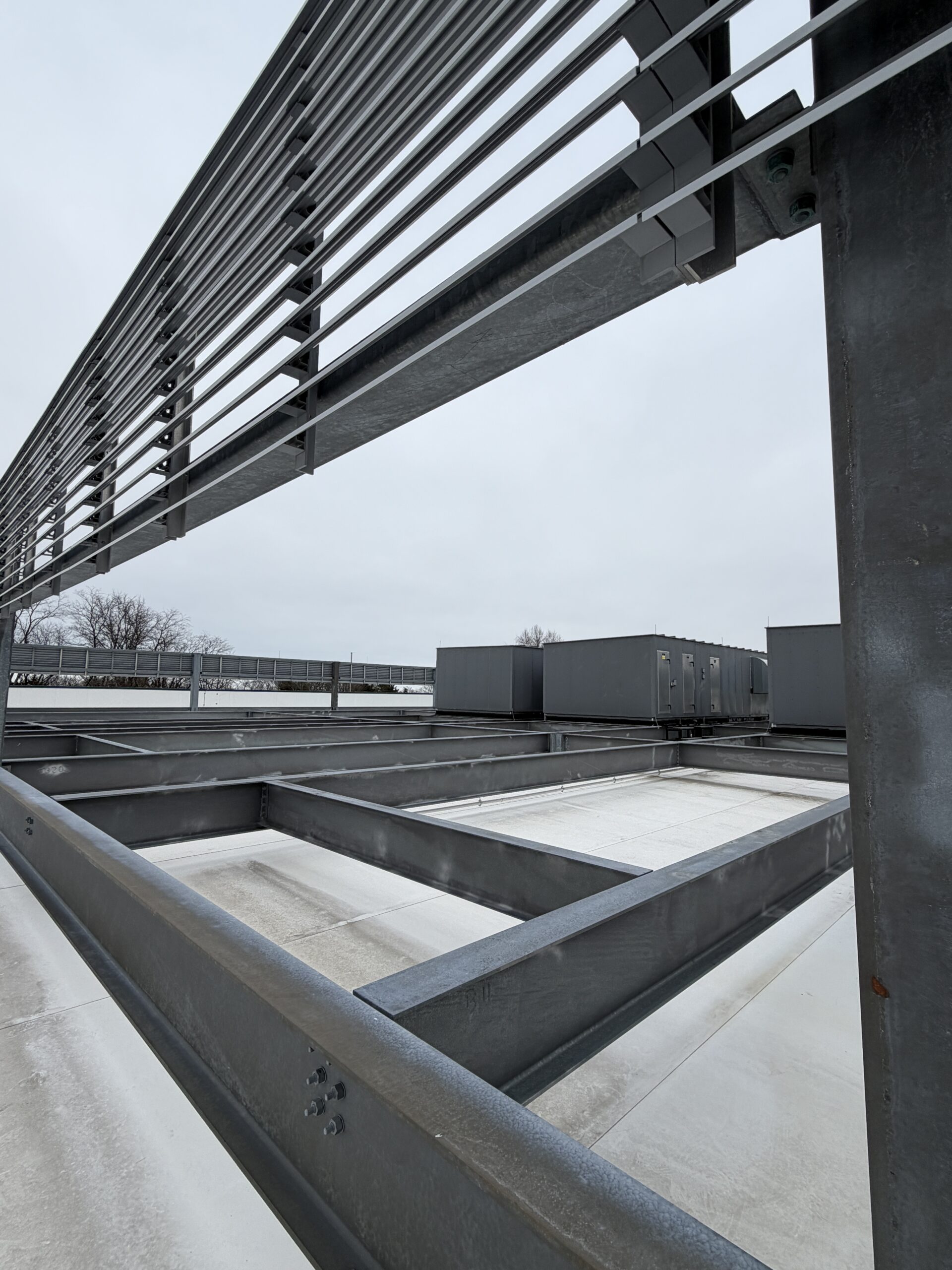

AI-ready infrastructure buildout

10 MW water-cooled data hall. Ciena WaveLogic 6 at 1.6 Tb/s. European bank and Colt partnerships. Fifth subsea cable connected. NJFX positioned as a hybrid CLS for distributed AI inference.

10 Years / 10 MW — the AI infrastructure decade

A decade of carrier-neutral CLS leadership and a 10 MW power milestone — at the exact moment a global AI infrastructure boom puts the subsea edge at the center of digital economy strategy.

The CLS is the infrastructure of the AI decade

As data volumes skyrocket and real-time applications become the norm, the network industry is undergoing rapid — and irreversible — evolution. Direct interconnection at cable landing stations is a fundamental shift in how global network infrastructure is designed, deployed, and operated.

The next generation of CLS facilities will integrate AI processing with subsea connectivity, serving as anchor points for distributed inference at a global scale. They will reward the carriers and enterprises who chose to co-locate here early — bypassing legacy chokepoints in favor of a more direct, resilient, and economically sound model.

NJFX leads this transformation. Our campus in Wall, NJ — 64 feet above sea level, Category 5 hurricane-resistant, DHS-designated Protected Critical Infrastructure — is where the subsea edge meets the terrestrial backbone, and where the AI infrastructure decade is being built, one connection at a time.

NJFX Editorial Team

Published by NJFX — North America's first carrier-neutral Cable Landing Station campus, Wall Township, New Jersey. Updated April 2026.
